What is a Production Ops Agent?

Somewhere in your company right now, an engineer is staring at a Slack channel full of alerts, trying to decide which ones are real. Another is twenty-three minutes into a war room, bouncing between Datadog, CloudWatch, a Prometheus tab, a runbook that's eight months out of date, and a postmortem from a similar incident last quarter that might as well not exist. A third just learned from a customer that the checkout service has been broken for forty minutes.

This is production ops in 2026. According to the people living in it, it isn't working, and most of the industry has quietly decided to accept that.

We surveyed more than 1,000 SRE, DevOps, and IT operations professionals for our 2026 State of Production Reliability and AI Adoption Report. The numbers are blunt:

- 53% of engineering teams spend 40% or more of their time on incident management instead of building.

- 44% of organizations had an outage in the past year tied to a suppressed or ignored alert.

- 78% had at least one incident where no alert fired at all, meaning customers became the monitoring system.

- 83% juggle four or more tools during a live incident; 41% juggle seven or more.

Read those numbers closely. The first and the last are about the cost of responding. The middle two are about something else: incidents that shouldn't have happened, or that were detectable earlier than they were detected. Nearly half of organizations had an outage traced to an alert that existed and was suppressed or ignored. Almost eight in ten had an incident with no alert at all. Those aren't response-time problems. They're prevention problems.

The industry's answer to production operations for the last decade has been respond faster. Faster alerting. Faster paging. Faster war rooms. Faster postmortems. More tools, more dashboards, more automation around the moment the pager goes off.

It hasn't worked. It was never going to. You can't out-respond a system that's fundamentally reactive.

A Production Ops Agent is a different category of answer. Not another dashboard. Not a chatbot bolted onto a ticketing system. Not a copilot that waits for you to ask it something. It's an AI teammate that shows up on its own, does the work that used to consume your best engineers, and hands back a verdict you can act on, often before the incident has had a chance to become one.

The category shift is simple: not faster response. Less response.

What does a Production Ops Agent actually do?

Three things. And while every vendor in this space talks about the second one, the first is where the posture shift actually lives.

1. Prevent

Catch the incident before it becomes an incident.

A seasoned engineer doing a morning walk-through spots the things the alerts miss. Config drift on a production cluster. Memory pressure climbing on a node that's a few hours from an OOM. A backup job that silently failed last night and nobody noticed. A cost anomaly headed for next month's bill. Latency creeping up on a dependency nobody's watching because it's not in your own org chart.

Nothing has broken yet. Nothing will alert. But an hour from now, or a day, or a week, something will. And by then the options are worse, the blast radius is bigger, and your best engineer is in a war room instead of shipping.

A Production Ops Agent does that walk-through every six hours. Every pillar, every service, every cluster. Not sampled. Surveyed. It catches the degradation before it breaks. It catches the silent failure before you learn about it from a customer. It catches the drift before it becomes an outage.

Early deployments surface degradation hours ahead of failure, with alert-noise reduction approaching 90% because the conditions that would have generated the noisy cascade got handled upstream. Roughly one in five would-be incidents never makes it to the alerting layer at all.
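To make one of those walk-through checks concrete, here is a minimal sketch of projecting when a node's climbing memory usage would hit its limit. It is a plain least-squares trend line, not a description of how any particular agent does it. The function name, the sample data, and the 16 GiB limit are all hypothetical.

```python
from typing import Optional, Sequence, Tuple


def hours_until_limit(
    samples: Sequence[Tuple[float, float]], limit: float
) -> Optional[float]:
    """Fit a straight line through (hour, usage) samples and project it forward.

    Returns the hours from the last sample until the fitted line reaches
    `limit`, or None when usage is flat, falling, or there is too little data.
    """
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(t for t, _ in samples) / n
    mean_u = sum(u for _, u in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return None
    # Ordinary least-squares slope and intercept.
    slope = sum((t - mean_t) * (u - mean_u) for t, u in samples) / var_t
    if slope <= 0:
        return None
    intercept = mean_u - slope * mean_t
    t_hit = (limit - intercept) / slope
    last_t = samples[-1][0]
    return max(0.0, t_hit - last_t)


# Hypothetical node: memory in GiB sampled hourly, with a 16 GiB limit.
samples = [(0, 10.0), (1, 10.5), (2, 11.0), (3, 11.5)]
remaining = hours_until_limit(samples, limit=16.0)
print(f"Projected hours until limit: {remaining}")
```

Nothing in this example would trip a threshold alert, since usage is still well under the limit. The trend is what gives the warning.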

What changes when prevention is the norm isn't just uptime. It's the shape of your week. Your senior engineers stop being on-call lottery winners and start being engineers again. The 2 AM page becomes genuinely rare, not nominally rare. The postmortem backlog shrinks because the incidents requiring postmortems stopped happening. The roadmap stops slipping a sprint every time something lights up in production.

This is the posture shift. Everything else is a consequence of it.

2. Resolve

When something does break, and something will, do the work that used to take a war room.

Triage the alert storm down to the signal. Map the blast radius. Pull the right slice of telemetry across every tool. Form hypotheses, rule them out, converge on a cause. Draft the remediation. Keep a human in the loop on the actions that matter.

The industry benchmark is unforgiving. Our survey found that 61% of organizations estimate an hour of downtime costs $50,000 or more, and 34% put that figure at $100,000 or more per hour.

Meanwhile, 93% of teams pull three or more engineers into a major incident, often six to ten for a P0. Every minute shaved off MTTR, and every engineer kept out of the war room, compounds. A Production Ops Agent that produces a first-verdict RCA in two to five minutes with 94% investigation accuracy isn't a nice-to-have when you're paying $100K an hour for the status quo.
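The arithmetic behind that claim is simple enough to sketch. The $100,000-per-hour figure comes from the survey. The incident durations and the six-engineer war room below are hypothetical inputs chosen only to illustrate the calculation.

```python
def downtime_cost(minutes: float, cost_per_hour: float) -> float:
    """Cost of an outage lasting `minutes` at a flat hourly rate."""
    return minutes / 60 * cost_per_hour


def engineer_hours(engineers: int, minutes: float) -> float:
    """Total engineering time consumed by a war room."""
    return engineers * minutes / 60


COST_PER_HOUR = 100_000  # survey figure: 34% put downtime at $100,000+ per hour

# Hypothetical incident: 60 minutes to resolve versus 45 minutes.
saved = downtime_cost(60, COST_PER_HOUR) - downtime_cost(45, COST_PER_HOUR)
print(f"Saved by resolving 15 minutes sooner: ${saved:,.0f}")

# Hypothetical war room of six engineers for the full hour.
print(f"Engineer-hours consumed: {engineer_hours(6, 60)}")
```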

But notice the framing. Resolve matters because the incidents you can't prevent still need to be handled well. It's a critical capability. It isn't the prize.

3. Optimize

The work that never makes it onto the sprint.

Right-sizing infrastructure. Finding the unused EBS volumes. Surfacing observability gaps. Fixing the runbook your senior SRE complained about for a year but never had ninety minutes to rewrite. Reclaiming the toil that's been chewing on your team's calendar since the platform was first stood up.

This is where a Production Ops Agent quietly returns more engineering hours to your team than anywhere else. It's also where the prevention loop gets sharper, because the gaps surfaced here are the blind spots that tomorrow's outages would have exploited.

Meet the personalities

Here's where it gets more interesting. A Production Ops Agent isn't a single monolithic brain that does everything. It's a team: a set of specialized agent personas, each with its own area of expertise, all operating on the same shared context layer.

Think of them as the reliability team you wish you had on staff: available at any hour, reasoning in parallel, equally engaged whether there's an incident in flight or just a morning walk-through to run.

The SRE. The pattern-matcher. Has seen this failure mode before, maybe not on your system, but on a thousand others. Thinks in symptoms, causes, and cascades. First into the investigation, usually the one who frames the right question. When an alert fires, the SRE is the one asking "is this actually a problem, or is this Tuesday?" When nothing has fired, the SRE is the one asking "should something have?"

The Infrastructure specialist. The platform veteran who actually reads the Kubernetes events. Knows what a node-pressure taint looks like when it's about to bite. Recognizes the difference between a scheduling bug and a capacity issue. Can tell you whether that pod churn is cosmetic or cascading. When the investigation touches the substrate (nodes, networking, the control plane), Infrastructure steps forward. Equally likely to surface a cluster problem during a routine sweep as during a page.

The APM specialist. Lives in the application tier. Latency distributions, error rates, trace spans, the shape of a p99 curve during a slow-burn degradation. Can tell whether a service is slow because it's genuinely slow or because its upstream just got noisy. When the problem is "the app feels wrong," or when it's going to feel wrong in a few hours, APM goes to find out why.

The Storage specialist. The quiet one who notices something's off at the data layer long before anyone else. IOPS, throughput, replication lag, capacity curves, backup integrity. The kind of expertise that usually lives in one person's head on your team, and goes missing the week you need it most.

The Security specialist. The cautious one. Sees anomalous authentication patterns, unexpected egress, configuration changes that shouldn't have happened. Operates with a different threat model than the rest of the team, assuming the worst until the evidence clears. When an incident has any signal that it isn't just an outage, Security is already in the room.

Your custom personas. The ones that matter most, because they encode the expertise that only lives in your team. How month-end close actually works. Which service owner owns what on weekends. Why the inventory pipeline is allowed to lag fifteen minutes but never thirty. This is where FalconClaw comes in: NeuBird's enterprise-grade skills hub turns your team's tribal knowledge into validated, reusable skills that the agent picks up and acts on automatically. Fifteen minutes of markdown becomes a new persona.

Every skill created survives team changes, organizational shifts, and the 3 AM incident where the one person who's seen this before is on vacation.

These personas don't each rebuild their own context. They share the same enriched substrate. Which brings us to the part that makes it actually work.

How it works (the short version)

Under every persona is the same foundation: the Agent Context Platform. It unifies every telemetry source your environment produces (metrics, logs, traces, alerts, events, configuration, deployment history, change logs) into a queryable, typed surface the agent can join across sources, filter programmatically, and correlate at query time. Not a static copy. A live view.

On top of that substrate sits the Agent Context Engine (ACE), the reasoning core behind every investigation, prediction, and optimization. ACE doesn't retrieve answers from a pre-built index. It reasons over dynamically assembled context, tracing causal chains across services and time and showing its evidence at every step. When the Production Ops Agent tells you the root cause, it shows you why: a complete chain of evidence, not a probable guess.

Continuous context enrichment runs across four pillars:

- Dependency graphs across infrastructure, orchestration, and application layers.

- Schema annotations that make raw telemetry intelligible.

- Statistical alert intelligence that separates signal from the 70% of alerts that aren't actionable.

- Investigation memory distilled from every past incident.

When the SRE persona asks "what upstream services depend on the checkout API, and which of them deployed in the last two hours?", the answer is already there to query. The persona doesn't chase it across five tools and reconstruct it from scratch.
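As a rough illustration of what that question looks like once the dependency graph and deployment history sit in one place, here is a minimal sketch. The service names, the call graph, the deployment timestamps, and both helper functions are hypothetical. The source does not describe the platform's actual query interface, so this shows only the shape of the join.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Set

# Hypothetical call graph: each service maps to the services it calls.
CALLS: Dict[str, List[str]] = {
    "web-frontend": ["checkout-api"],
    "mobile-gateway": ["checkout-api", "search-api"],
    "checkout-api": ["payments", "inventory"],
    "search-api": [],
    "payments": [],
    "inventory": [],
}

# Hypothetical deployment history: service -> time of last deploy.
LAST_DEPLOY: Dict[str, datetime] = {
    "web-frontend": datetime(2026, 1, 15, 11, 10),
    "mobile-gateway": datetime(2026, 1, 15, 8, 0),
    "payments": datetime(2026, 1, 15, 11, 30),
}


def dependents_of(target: str, calls: Dict[str, List[str]]) -> Set[str]:
    """Every service that reaches `target` directly or transitively."""
    reverse: Dict[str, Set[str]] = {}
    for caller, callees in calls.items():
        for callee in callees:
            reverse.setdefault(callee, set()).add(caller)
    seen: Set[str] = set()
    stack = [target]
    while stack:
        node = stack.pop()
        for caller in reverse.get(node, ()):
            if caller not in seen:
                seen.add(caller)
                stack.append(caller)
    return seen


def recently_deployed(
    services: Set[str],
    last_deploy: Dict[str, datetime],
    now: datetime,
    window: timedelta,
) -> List[str]:
    """Subset of `services` whose last deploy falls inside the window."""
    return sorted(
        s for s in services
        if s in last_deploy and now - last_deploy[s] <= window
    )


now = datetime(2026, 1, 15, 12, 0)
dependents = dependents_of("checkout-api", CALLS)
suspects = recently_deployed(dependents, LAST_DEPLOY, now, timedelta(hours=2))
print(suspects)
```

The join itself is easy. What takes the time during an incident is getting the graph and the deploy log into the same queryable shape.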

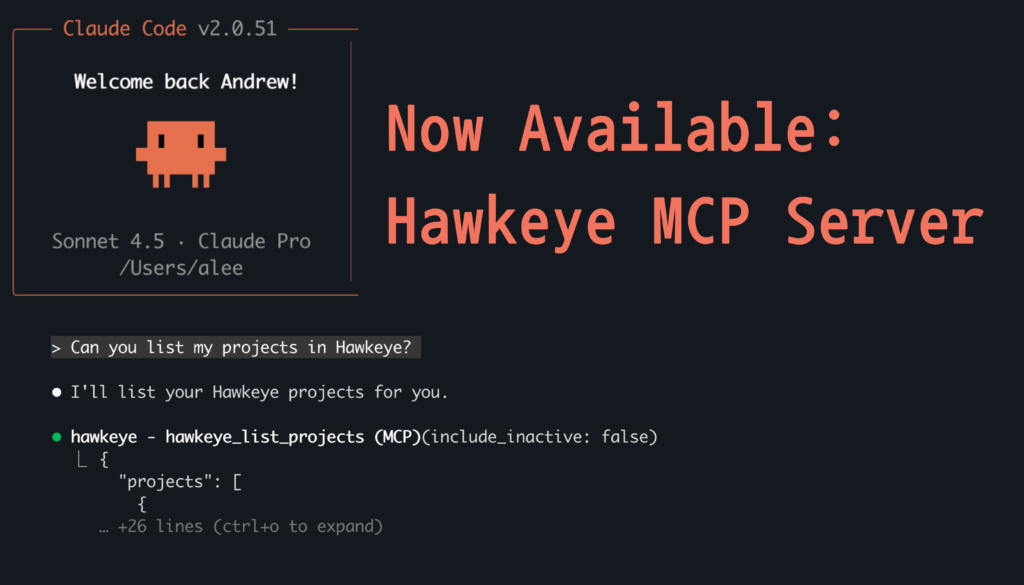

Engineers work with the agent through whichever interface fits their flow: a web app for real-time visibility, a terminal UI (NeuBird AI Desktop) for command-line investigations, and MCP integration with Cursor and Claude Code when they want the agent alongside their code.

Always-on, not on-demand

One more thing that separates a Production Ops Agent from a copilot: it doesn't wait for you to ask.

Scheduled investigations run continuously in the background. A health-check sweep every six hours. Backup verification at 6 AM on weekdays. Cost scans at 8 AM. Capacity forecasts at 8:35 AM. Performance baselines every six hours. Custom investigations on whatever cadence you need.
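The cadences above can be written down as data. This is a minimal sketch using only the Python standard library. The `Schedule` class, the choice of 00:00, 06:00, 12:00, and 18:00 for the six-hour runs, and the assumption that cost scans and capacity forecasts run daily are all illustrative, not taken from the product.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import FrozenSet, List

ALL_DAYS = frozenset(range(7))  # Monday=0 ... Sunday=6, as in datetime.weekday()
WEEKDAYS = frozenset(range(5))  # Monday through Friday
EVERY_SIX_HOURS = frozenset({0, 6, 12, 18})  # assumed start hours


@dataclass(frozen=True)
class Schedule:
    """A named investigation that runs at fixed hours on given days."""
    name: str
    hours: FrozenSet[int]
    minute: int = 0
    days: FrozenSet[int] = ALL_DAYS

    def is_due(self, now: datetime) -> bool:
        return (
            now.weekday() in self.days
            and now.hour in self.hours
            and now.minute == self.minute
        )


SCHEDULES = [
    Schedule("health-check sweep", hours=EVERY_SIX_HOURS),
    Schedule("backup verification", hours=frozenset({6}), days=WEEKDAYS),
    Schedule("cost scan", hours=frozenset({8})),
    Schedule("capacity forecast", hours=frozenset({8}), minute=35),
    Schedule("performance baselines", hours=EVERY_SIX_HOURS),
]


def due_at(now: datetime) -> List[str]:
    """Names of the investigations that should start at `now`."""
    return [s.name for s in SCHEDULES if s.is_due(now)]


# 2026-01-05 is a Monday; 2026-01-10 is a Saturday.
print(due_at(datetime(2026, 1, 5, 6, 0)))
print(due_at(datetime(2026, 1, 10, 6, 0)))
```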

This is the machinery behind prevention. A copilot can only help when you remember to ask it. A Production Ops Agent asks its own questions, on a schedule, against every surface. Every run enriches the context engine for the next one. The walk-through a senior engineer would do if they had the time to do it four times a day now happens four times a day, forever, across every part of the stack.

Every investigation makes the next one smarter. Your team walks in at 9 AM to a summary of what happened overnight, what needs attention, and what's already been handled.

Engineers we've worked with describe the shift the same way every time: "I used to start my day in the alert queue. Now I start it reading a briefing."

That sentence is the whole thing, stated plainly.

The gap

One stat from our survey stuck with us more than the rest. 74% of C-suite leaders say their organization actively uses AI for incident management. Only 39% of practitioners agree.

Thirty-five points of disagreement on a yes/no question is a lot to explain away.

Here's what we think is going on. Both sides are telling the truth.

Leadership is right that AI has shipped. Every major vendor in the incident management and observability space has a generative AI feature in production: copilots that summarize incidents, agents that investigate alerts, natural-language querying over telemetry, AI-drafted postmortems. The product catalogs are full of them. The launch announcements are impressive.

Practitioners are right that it isn't working. Not yet, not the way the slide decks promise. And the industry is starting to say this out loud. ClickHouse's engineering team wrote earlier this year that most AI SRE implementations have been disappointing: thin layers on top of older observability platforms, inheriting their retention windows, their blind spots, and their data boundaries. Thoughtworks, in their 2025 AIOps retrospective, landed on the same diagnosis in plainer terms: AIOps performance is bounded not by model intelligence, but by context availability. Even the incumbents have begun conceding the point in their own launch copy. The New Relic SRE Agent announcement opens by acknowledging that general-purpose AI tools lack awareness of service ownership, dependency topology, and reliability constraints.

Translation: you can't reason over a production environment you can only see through one window. A copilot bolted onto an APM tool knows what the APM tool knows. An agent inside an incident manager knows what the incident manager knows. Neither of them can answer "what upstream services depend on checkout, which of them deployed in the last two hours, and which is holding a stale config?" That question crosses four tools and three data shapes, and none of the incumbents' AI ever saw more than one of them.

That's what the 35-point gap actually measures. Leadership counts the features that are shipped. Practitioners count the investigations those features couldn't finish, and the incidents that should have been caught upstream but weren't, because no AI sitting inside a single tool has the breadth to catch them.

Closing the gap is a data engineering problem before it's an agent problem. You have to make the production substrate legible first, across every tool, not one at a time. That's what the Agent Context Platform does.

The next decade

The last decade of production operations was measured in how fast teams could respond. The next decade will be measured in how rarely they need to.

The teams that win it won't be the ones who respond fastest. They'll be the ones whose systems don't need to respond as often, because the incidents got caught upstream, at 3 AM, by an agent doing the walk-through no human had the hours to do. Everything else (the faster resolution, the hours returned, the postmortem backlog shrinking) follows from that shift.

Want to see it run in your environment? Book a live demo →

Written by Francois Martel, Field CTO
